As a school administrator for over 10 years, I have seen many ed tech companies come and go. When the pandemic hit, the market was flooded with options for educators to help reach every student. The challenge is, there are so many questions to consider as a leader before you purchase an ed tech product. How do we know they are any good? Will they still be around in a few years? What’s the cost/value of their product when it comes to learning?

Over the years, I curated a list of core questions that I would ask every ed tech company that pitched to me. These could be as basic as ‘will your software work with my device?’ (we were a 1:1 iPad district) or ‘can we get some sort of discount for a long-term contract?’

There are hundreds, if not thousands, of ed tech companies out there vying for our attention and our pocketbooks. I’ve spent the last few years as an advisor to some of them, in the hopes of not only making their product better for education but also getting it to a price point that schools can actually afford and maintain over a long period of time. It’s through these lenses of teacher, administrator, parent and ed tech advisor that I decided to partner with BAM Radio Network on my newest podcast: The Search: Putting Ed Tech Tools Under the Microscope.

The premise

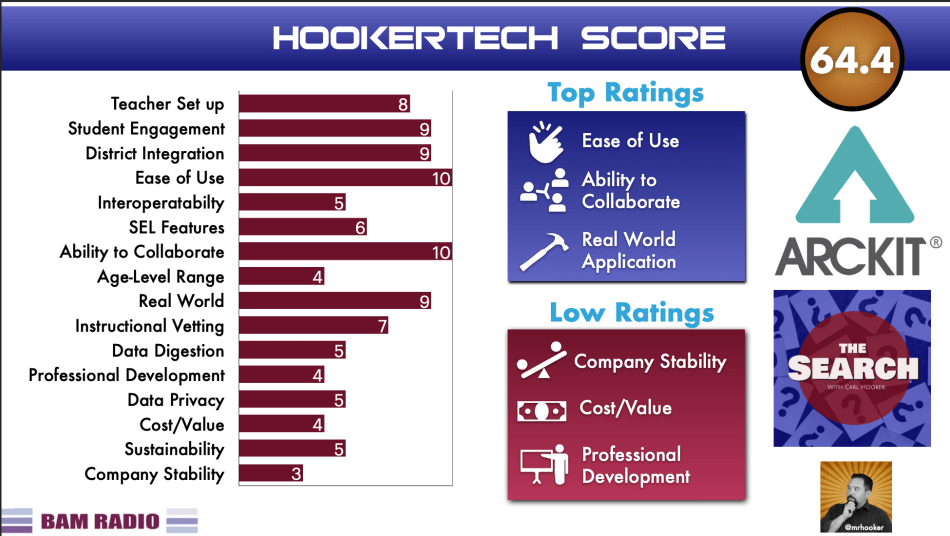

During each episode I’ll primarily take on the role of teacher and administrator. I’ll be asking the companies questions about onboarding, “data digestion” and ease of set-up for the classroom teachers. All of these questions, as well as some additional research into the company and platform, will help inform my 17-point rating system known as the HookerTech Score. The data points in this score are largely based on a combination of ISTE standards, the Future Ready Framework, and my own criteria that, over the years, I found important when making major financial/learning decisions for my district.

Here are the 17 points in more detail as well as their rating scale (in parentheses):

Ease of Teacher Set-up (4-6)

Teachers are facing a time famine. Getting a new product to integrate takes time, professional learning, coordination with the curriculum, and ultimately prep time to introduce to the students. The more “turn key” ready platforms will achieve the highest rating (6), whereas one that requires a heavier lift to get started will get the lowest rating (4).

Student Engagement (3-7)

Student engagement can be a double-edged sword. I’ve seen some engaging online games that have little-to-no instructional value. I’ve also seen some great platforms that use high-end data science but are presented to the students in a non-engaging way. As there are more degrees of engagement, this data point uses a wider scale: a platform seen as dull and formulaic might receive a 3, whereas a platform that is extremely engaging scores a 7.

Ease of District Integration (4-6)

How hard is it to get kids into the system? Does it require nightly uploads? Does it pair with a current single sign-on (SSO) dashboard like ClassLink or Clever? The easier the integration, the higher the score. If it takes a few weeks, several emails, phone calls, and back-and-forth finger pointing, the score drops.

Ease of Student Use (4-6)

This one is a little different than engagement as it involves the number of clicks it takes for a student to use, interact, produce, and share within the platform. If it takes my students ten clicks to get to anywhere meaningful, then you get the low score.

Device interoperability (4-6)

While most products are now web-based, there are some that have vastly different experiences on a smart phone vs. a laptop. Some companies may claim “we work on all devices” but not deliver the same experience on each of them.

SEL and Equity Features (3-7)

In the 21st century, we shouldn’t have tools that cater to only one demographic or group. Students have diverse backgrounds and learning styles. The tools we purchase should reflect those differences while also empowering each child on their learning journey. The higher rated platforms on this data point go out of their way to provide differentiated learning experiences, especially in those traditionally underserved communities.

Ability to Collaborate (4-6)

The majority of ed tech tools are bidirectional in terms of interaction, meaning the student interacts with the platform and vice versa. The world we live in is much more omnidirectional. We interact with platforms but also collaborate with others. This particular data point takes into account the level of collaboration built into the platform. If there is no collaborative element, the company will receive the low mark of 4, while those that are built around collaboration will receive a 6.

Age-Level Range (4-6)

Put simply, which age groups is the platform best suited for? If it’s only elementary or middle school or high school focused, the platform gets a 4. If it’s something that could be used in the K-12 or even K-16 environment, then it receives the top mark.

Topic Broadness (4-6)

Similar to the age-level rating, does the tool revolve around a specific topic or subject area? Or is it something that is evergreen across all subject areas and courses?

Real World Application (4-6)

How authentic is the application to real life? Is it an actual tool (like Gmail) that the students would use outside of school? Rarely would an ed tech specific tool garner the top ranking here, but some might mimic real world scenarios (posting/commenting online, coding an app, etc) that could give it higher marks.

Instructional vetting (4-6)

Companies create platforms to help kids learn and to make money. Sometimes, those two goals can be prioritized differently depending on the company. For this data point, I’m looking at how much input educators have on the platform itself. Are there current or former educators advising on its use and development? Or is it a group of programmers that developed an engaging tool but without a lot of instructional thought or insight?

Data “Digestion” (4-6)

Most companies provide some form of reporting metrics. These can be as robust as an interactive grid of customizable learning data points or as non-intuitive as a .csv file full of log-in counts. As mentioned before, time is very limited for both teacher and administrator. The easier the data is to digest and act on, the more useful it is, and thus the higher the rating.

Professional Learning (4-6)

Does the company offer any type of onboarding and continual training? Is it online, in-person, or both? Offering additional training and following up with the end users on best practices can help a platform ‘stick’ in the classroom. My rating here varies from none offered (except maybe the occasional video) to full on staff training and continual follow-up user group support.

Data Privacy (4-6)

To be candid, this is a topic that is far from the minds of most administrators and probably all teachers. That said, as I mentioned in this recent article on cybersecurity, it’s something we can no longer dismiss. What data do companies have access to and who ‘owns’ it are just a couple of questions to consider for this data point.

Cost/Value (3-7)

For this data point, the highest rating would be a 7 for something that is “free”. However, we know that with free comes some loss in functionality, possibly in data privacy, or a decrease in usability due to ads. On the other hand, some platforms demand dollar amounts over $20/student/year which no district can support long term. This one is a little easier to define as follows:

- 7 – Free/less than $1/student/year

- 6 – $1-$3/student/year

- 5 – $3-$6/student/year

- 4 – $6-$15/student/year

- 3 – $15+/student/year

That said, some programs have a higher up-front cost but lesser yearly cost. Others give discounts based on long-term purchases which can drive down costs or further discounts based on different user amount thresholds.
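The price tiers above can be sketched as a small lookup. This is only an illustration under a few assumptions: the function name `cost_value_rating` is hypothetical, a price that lands exactly on a tier boundary (such as $3) is assumed to fall into the more expensive tier, and the $15+ tier is assumed to score 3, the bottom of the 3-7 scale. Up-front fees and multi-year discounts would first need to be converted into an effective per-student yearly price.

```python
def cost_value_rating(price_per_student_year: float) -> int:
    """Map a yearly per-student price in USD to a Cost/Value rating (3-7).

    Hypothetical helper; the boundary handling is an assumption, since
    the published tiers share their edges ($1-$3, $3-$6, ...).
    """
    if price_per_student_year < 0:
        raise ValueError("price cannot be negative")

    # (exclusive upper bound, rating), cheapest tier first.
    tiers = [(1.0, 7), (3.0, 6), (6.0, 5), (15.0, 4)]
    for upper_bound, rating in tiers:
        if price_per_student_year < upper_bound:
            return rating

    # $15 or more per student per year: assumed lowest rating on the scale.
    return 3
```

For example, a platform priced at $4.50 per student per year would land in the $3-$6 tier and receive a 5.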

Sustainability (4-6)

How long will this platform and company be around? If a tool is extremely impactful for all student learning, it’ll likely be of importance to a district. If it’s only used by a handful of students for a niche reason, it’s less likely to be around in a couple of years. Out of all the data points, this one is most affected by others like cost/value, professional learning, topic broadness, age-level range, and ease of use. All of these play a role in how sustainable a program can be in a district over time.

Company stability (3-7)

I used to work with a tech director who advised me (somewhat morbidly) “if you can lose the entire company in a car accident, it’s not that reliable a company.” Lots of start-ups in the ed tech space are lean when it comes to employees, which lends itself to a less than stable rating. Some have found a great middle ground and survived and thrived in the space for more than 4-5 years which increases their stability rating in this case. If we are spending the time to onboard it, train on it, and gather data with it, we want it to be around for a while, aka more stable.
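With all 17 data points and their scales listed, the arithmetic behind an overall total can be sketched in a few lines. This is a rough illustration rather than the actual scoring tool: the names `SCORE_RANGES` and `total_score` are hypothetical, and the sketch assumes the total is a plain, unweighted sum of whole-number ratings.

```python
# Rating scales (low, high) for the 17 data points, as listed in this post.
SCORE_RANGES = {
    "Ease of Teacher Set-up": (4, 6),
    "Student Engagement": (3, 7),
    "Ease of District Integration": (4, 6),
    "Ease of Student Use": (4, 6),
    "Device Interoperability": (4, 6),
    "SEL and Equity Features": (3, 7),
    "Ability to Collaborate": (4, 6),
    "Age-Level Range": (4, 6),
    "Topic Broadness": (4, 6),
    "Real World Application": (4, 6),
    "Instructional Vetting": (4, 6),
    "Data Digestion": (4, 6),
    "Professional Learning": (4, 6),
    "Data Privacy": (4, 6),
    "Cost/Value": (3, 7),
    "Sustainability": (4, 6),
    "Company Stability": (3, 7),
}

# Lowest and highest possible totals: 13 points on a 4-6 scale
# plus 4 points on a 3-7 scale.
LOWEST_TOTAL = sum(low for low, _ in SCORE_RANGES.values())     # 64
HIGHEST_TOTAL = sum(high for _, high in SCORE_RANGES.values())  # 106


def total_score(ratings: dict) -> int:
    """Check one rating per data point and return the unweighted sum.

    Hypothetical helper; assumes every data point counts equally.
    """
    missing = SCORE_RANGES.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    for name, (low, high) in SCORE_RANGES.items():
        if not low <= ratings[name] <= high:
            raise ValueError(f"{name} must be between {low} and {high}")
    return sum(ratings[name] for name in SCORE_RANGES)
```

A company rated a middle-of-the-road 5 on every data point, `total_score({name: 5 for name in SCORE_RANGES})`, would total 85.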

Summary report

Taking all these data points and putting them in realistic ranges gives us the ability to create the company’s total HookerTech Score. While no company on the planet would likely receive a perfect score (106 for you math people out there) it’s equally unlikely that any company could receive the lowest score (64). Having tested this out on a couple of very different companies on the show already, my prediction is the rating will usually fall between 80-95ish. Here’s a sample report we did of our first recording with a company called Arckit so you can see how the data points work:

We will attach a similar report in the show notes of each episode. Do you have a company that you want us to put under the microscope? Or maybe you would like your platform to be put to the test? Either way, feel free to send me a message or comment below and we’ll try and get you on The Search!

Hi Carl! We would love to put our classroom management, instruction, and monitoring tool, classroom.cloud, under the “HookerTech” microscope! I’d love to see your thoughts and find out what we could improve upon (and what we’re doing well).